ViZARTS SUMMIT 2020

Shaping the Future of Filmmaking and Storytelling by Sharing Experiences

25-26 NOVEMBER 2020

Introduction

The second edition of the ViZARTS Summit was completely virtual and was live-streamed from our studio in the SMILE Lab at Medialogy, Aalborg University Copenhagen.

ViZARTS is funded by Nordisk Film Fonden, Samsung and Aalborg University. Since its inception two years ago, we have focused on bringing filmmakers and techies together to collaborate and to explore Virtual Production (VP) tools and real-time filmmaking technologies.

We are currently at a pivotal point in history, where more and more companies in the film industry are moving towards new real-time VP pipelines. As COVID-19 continues to complicate film production, VP may also give content creators a competitive advantage.

At this year’s ViZARTS Summit, filmmakers and techies shared their experiences of how they are embracing the new possibilities. Professionals and up-and-coming filmmakers had enlightening discussions, and all participants got loads of inspiration live-streamed conveniently to their comfy chairs at home.

Evening One - Wednesday 25.11.20

The second annual ViZARTS Summit was opened by ViZARTS founder and associate professor Henrik Schønau Fog from the Medialogy programme at Aalborg University Copenhagen. We were then welcomed to the ViZARTS ‘SouthHaven Studio’ in the SMILE Lab by moderator Alex Lehman, a Copenhagen-based actor specializing in motion capture performance and voice-over for video and animation.

See the whole introduction here: Youtube

Live demo of Rokoko Smartgloves

In the first session, Matias from Rokoko demonstrated the Smartgloves. Building on its inertial measurement system, Rokoko has worked towards a mobile glove system that is fully compatible with its suits.

Developing the gloves so that they can also work stand-alone opens up possibilities for digital puppeteering: the animation data can be retargeted in Blender or Maya.

“With crisis comes opportunity”: Matias notes that with the pandemic raging and people having to stay home, he sees people being much more creative with their suits and gloves, creating new pipelines and creative workflows.

He further reflects on the crisis and its impact on the hardware industry, stating that the focus has shifted towards smaller, more agile teams and easy-to-use tools that accommodate this new workflow.

Where traditional animation is a linear process of pre-production, production and post-production, Rokoko has focused its studio on non-linear workflows built around game engines and live collaboration, changing the ways filmmakers create together.

See Matias’ demo and Q&A here: Youtube

A Director Jumping in at the Deep End

In the second session, Danish director Rasmus Kloster Bro presented his study on video sketches as a fundamental tool for creating feature films: working with video and cinematic language at a stage where you would traditionally only be writing. Rasmus believes that cinematic language opens many more creative doors for filmmakers, as it allows them to tell their stories and communicate their cinematic visions in ways that text does not.

Currently developing a feature film, a medical thriller, Rasmus wanted to explore filming underwater and, as he puts it, “directing crabs”, something he had never tried before.

As they proceeded with filming, using different rigs in the water, one of the main findings was that light behaves differently underwater: everything that is far away or not directly lit turns greenish or bluish. Wanting to recreate this underwater relationship between colour and depth on the surface, Rasmus used a stereoscopic camera, but found that its footage was unfortunately too unreliable.

Rasmus believes that the lack of a pre-production tradition in Danish film production limits our collective expression. Asked how other directors can start dipping their toes into VP, Rasmus answers: “Open your mind and see what emotional feedback you get, because writing and talking is not everything.”

See Rasmus’ video and Q&A here: Youtube

From Actors to Animated Characters

In the third session, Mark presented a prototype project in which he investigated how cut-scenes can be created in Unreal Engine using VP.

Mark believes in motion capture as an acting tool and trusts that, if done right, viewers will forget they are watching an animated character and see a living, breathing human instead. He goes on to say that in Denmark, where budgets are low and productions are shorter, actors are usually only called in for voice-over, wasting the strengths and nuances an actor can bring to a game or animated film production.

Mark highlights the power of real-time tools and VP for capturing unplanned, more human moments that traditional animation cannot achieve.

“Dream big and think small” is his advice to all new content creators who want to start working with VP.

Mark closes his session with the advice: “Fail fast and fail often, because the more you fail, the more you learn.” Filmmakers tend to think of VP as a shiny new gem and a new way of making films. He questions this assumption, stating that this is not the reality (yet): VP is a tool with which one can potentially make films. For the time being, “…use it as a pre-viz tool, and then go out and film your film traditionally. In Denmark our budgets are low and we can’t afford to make mistakes – that’s why VP is the future,” Mark concludes.

See his video and Q&A here: Youtube

How can we bring digital characters and environments to life on set with Performance FX?

Session four was with puppeteer Robin Guiver, who introduced the concept of “Performance FX” and stated that incorporating puppetry in film production is first of all about finding a puppeteer or an animator to get the references right.

“Puppetry is acting with a little more distance,” he states, adding that it is much more instinctive than people would think.

Countries are in lockdown right now, and the film industry is operating under strict health and safety regulations, which have drastically changed the way many people work.

When it comes to puppeteering technologies, he highlights that you can use anything, such as a roll of tape or a piece of paper with two dots on it: it is not about having pretty dolls, it is about interaction and feeling.

Robin predicts that puppeteering and Performance FX are going to be used more frequently in filmmaking because the tools are becoming increasingly accessible.

See much more about Robin’s ideas on how to create and use Performance FX for films and animation here: Youtube

Exploring the power of Virtual Production

Session five was with Iris M. Schmidt from Virsabi, which specializes in augmented reality, virtual reality, mixed reality and, more recently, Virtual Production. The studio has just got its new LED wall up and running, and in this talk Iris sheds some light on the possibilities it brings.

She explains that, at least for Virsabi, the most prominent use of the LED wall so far has been for commercial clients.

Being in the midst of a pandemic also means that you cannot travel across the world to shoot your productions. With a large LED screen, however, you can create any environment in 3D, and just like that, you are there.

Iris elaborates that the technology is slowly democratizing the industry and widening the types of films and stories we can create, since you can manipulate time and space in ways that were never possible before.

See her talk and conversation with Alex here: Youtube

Exploring Virtual Production on the Mandalorian

The last session of the day was also the “TADAAA!” surprise!

We had the honor of Zooming in Landis Fields and Kenny DiGiordano from the US to our SouthHaven studio to talk with Alex about Virtual Production on Disney’s Star Wars series The Mandalorian.

The Mandalorian is an American television series created by Jon Favreau for Disney+. It premiered in November 2019 and is the first live-action series in the Star Wars franchise.

The fireside chat with the two veterans opened with a conversation about VP and this new way of working.

“Embrace the new technology – it is coming in with full force – the lines of traditional filmmaking are blurred out”, says Kenny.

Landis adds: “This is a huge turning point for storytelling – It allows everyone to come together in a super collaborative way. You get to see folks around the table talking to each other.”

Looking at the VP production of The Mandalorian, many filmmakers are daunted by the costs of the huge LED screen, the sets and so on, and do not think such a thing is realistic on Danish budgets. Asked what advice he would give Danish filmmakers on limited budgets, Landis answers:

“At the end of the day – Virtual Production is another way of everybody failing as fast as they can. Go in there and pre-viz, figure out what works, and what does not.

A lot of people get enamoured in what the big guys are doing – but at the end of the day, I think what is really powerful is just going in there and doing it.

Many traditional film makers may think that it is just a bunch of kids who make video games behind computers, but that is not the case. Don’t get caught up in the technicalities. Use what you have access to.”

As a traditional filmmaker, one can quickly become overwhelmed by the new technology and the new ways of working with it.

To this, Kenny answers: “I can’t stress enough the importance of pre-production. Doing that, and spending time on it, is where you are able to save time and money, because when you step onto the set, everybody knows what they are doing and what the shot looks like.”

Landis continues:

“Have a holistic understanding of what you are trying to achieve:

Understand how a camera works, understand what real-time is. What does it take to make a film? Think like a production designer, a DP, a director, a set decorator… You have to think like all these people, so having a solid understanding of all of these things will make you more valuable.”

It is no surprise that, when we discuss and work with VP at ViZARTS, we meet many skeptics who do not believe in VP or think there is any future in using these tools. Some say it is just a phase, while others refuse to even talk about it.

To this observation Kenny answers:

“VP has been used for a long time already. Things we have made in the past have also been VP like the ARWall etc. I don’t see it going anywhere, I just see it getting better. Everybody will have a Mandalorian stage. In the meantime, everybody is just working to get there”

Landis supplements, talking about the core of what makes Virtual Production useful:

“VP is that you are using the creative decisions for the final image. It is not just concept art that is thrown away. We are using everything. You almost feel reckless when you don’t have these tools that we are using after you have experienced them. You feel that you are making ill decisions on the set. You get addicted to collaborating, and if you move something, I can see it.”

Tech aside, one of the biggest questions on many minds was: is this new pipeline going to cost jobs in some of the traditional roles on a set?

“I have not seen a drop – if anything it is just making the production more efficient. You still have all the people you used to have. If anything you now also have all the technology people running everything, changing things on the fly.

It is more inspiring – more people wanting to come on board”, Kenny reassured the worried online guests.

“We use the same things, but use them differently. We approach it much more efficiently”, Landis agreed.

See how the Mandalorian is produced with state-of-the-art VP tools and pipelines, and listen in on the veterans’ conversation with Alex here: Youtube

The ViZARTS surprise guests concluded the first evening of the Summit, and many participants met in the virtual ViZARTS Bar on gather.town to continue the conversations until late.

You can watch the full recorded live-stream here:

Full Evening One Youtube video: https://youtu.be/NLq7LJYkNeQ

Evening Two - Thursday 26.11.20

Virtual Cinematography from Home

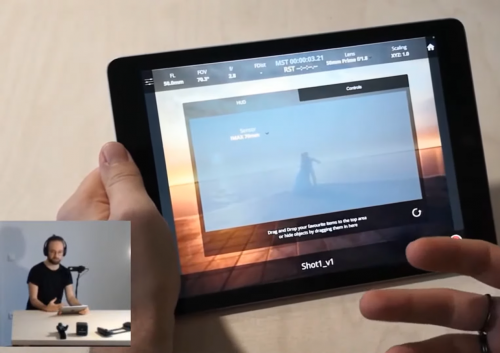

The second evening of the Summit began with a demo by Johannes Wilke from GlassBox Technologies, showcasing their high-quality virtual camera product “DragonFly 2.0”.

GlassBox was founded in 2016 with the vision of creating technology that transforms digital content creation by leveraging frontier technologies such as game engines and VFX.

“Since last year GlassBox has worked hard on launching new products, such as Live Client, software for importing facial tracking data into Unreal, and BeeHive, which is our data management tool, which you will need if you work with DragonFly on a larger-scale project,” Johannes explained.

Due to COVID-19, products like these have suddenly become more relevant than before, putting the products GlassBox develops in high demand.

“You are able to work with the software remotely, but do consider having one machine that runs the scene and then remote machines that make edits, etc.,” he continues.

Johannes elaborates that to use DragonFly you don’t even need a Vive tracker; an iPad is enough to get started.

“The app is not available for Android now, but if you are interested, you can always throw us an email,” he adds.

To explain what DragonFly is and what it can do, Johannes describes the software as a camera that you have in your virtual scene, and if you need to use it live on set, you can of course do so.

“When people ask us this, I always reply with another question: ‘Do you really need to use it live?’ One of the benefits of virtual production, as I see it, is that you can work with everything sequentially until you have the perfect shot.”

One concern viewers of the demo might have is whether the tablet is as good as an actual camera. To this, Johannes says that “the artistic side of things [is] designed to be as close to what they already know as cinematographers.” He further explains that the system is fully customizable to fit the needs of the user.

See the Demo of DragonFly 2.0 and the conversation with Johannes and Alex here: Youtube

Marionette: XR Motion Capture

The second session of the day was with Tommy Thore Ipsen, CEO and creative director at Marionette XR.

Marionette XR focuses on motion capture in virtual reality, allowing you to be surrounded by the virtual world while capturing your own movements, for example of the upper body.

“Of course, you cannot record motion capture as you normally would with a suit, but you can work with it in layers,” Tommy said.

Using machine learning, the company aims to create a motion capture set-up that uses as few trackers as possible.

Tommy also invited Summit attendees to take part in a beta test of the system; you can reach him at thore[a]marionettexr.com

See Tommy’s demonstration and Q&A here: Youtube

Last Days of Snow

The next session was with Jonathan Nielssen from Loeding, who showcased their game “Last Days of Snow”.

Working with Unreal Engine allowed the team to experiment with the characters and the setting, and to work with multiple layers at the same time.

“This is also quite interesting when it comes to world building. It is like gameplay which feeds into the story, and the story feeds into the gameplay again.”

Jonathan elaborates: “Working on a project like this compared to film, we have to work hard for a long time before actually seeing the output. But once you are there, you are free to do whatever you want, because you have the world. From there you can scale things, or even film the actors after recording the motion capture. The sky’s the limit.”

Want to learn this? “Online learning and Googling is the way forward!” This is Jonathan’s advice to curious filmmakers or game developers who have never worked with VP before. He emphasizes that it is really just a matter of downloading Unreal Engine and getting started: “I don’t think there is any other way,” he says.

What fundamentally fuels Jonathan’s work is his desire to merge the competencies of film with game development. “There are so many things that games can learn from film, in regards to the visual language of how you cut, edit and so on.”

See Jonathan’s presentation and the Q&A session with Alex here: Youtube

The Future of Holographic Flat Screens

The fourth session was about the future of holographic flat screens with Steen Iversen, Clas Dyrholm and Peter Simonsen from Realfiction.

“A holographic display is like a window. Through that you can see everything, naturally in 3 dimensions,” Clas stated. Project ECHO aims to create the world’s first holographic television, where everything has true stereoscopic depth.

The ECHO project is still in the R&D phase, but when released, it will include hand- and eye-tracking for controlling the TV and will be optimized for up to five simultaneous viewers. Streaming will also be a feature.

It is designed for both consumers and professionals, as Realfiction expects that “Over the next 10 years we will have a huge wave of 3D content coming into the world. We will not only see it in computer games and film production. We will also see it in PowerPoints…,” Peter Simonsen stated.

The project focuses on the pixel technology that makes the holographic effect possible. To make the screen as precise and stable as possible, its other components draw on innovations from other industries.

What drives Realfiction is the goal of making, through the ECHO project, a screen that can immerse people in different kinds of content, be it a Skype call, a game or a movie, and change the way we interact with it.

In the discussion afterwards, the attendees were naturally eager to know when they could have their own holographic TV.

See the inspiring video and the informative Q&A session here: Youtube

Motion Control, Live-Viz and Virtual Production

For the next session of the evening, Romain Bourzeix from Spline visited us to talk about motion control, Live-Viz and virtual production in France.

Romain has a background in the VFX industry and has been working on film for the last decade.

Spline was founded in 2017 and now has a 300-square-metre studio housing its newest tech, including its motion control rig, camera and software.

Romain explains Spline’s services as follows: “Spline develops and provides solutions for the constantly evolving needs of video production,” and these solutions are also ready to be used in the film production industry.

What drives Spline is developing these new tools “to create a solution where we can bring something on set to be present, when most of the problems will come in post production. [It is important] to be prepared and to be forced to have some preparation concerning the effects, because motion control is when you have specific effects to do, and so the motion control is a [complicated] workflow and a big toy… And having big toys on set… Guys love big toys and they have to respect the one who is controlling it.”

Like Realfiction, Spline takes advantage of innovation and state-of-the-art technology from other industries, merging them to create something new. Through Spline and its expertise, Romain hopes to create better workflows and communication between the on-set team and the VFX team.

See the visual presentation and Q&A session with Romain here: Youtube

Virtual Production for Indie Filmmakers?

The last guests at the ViZARTS Summit were two crews who have made Star Wars fan films here in Denmark. This talk took us behind the curtain of how these films were created, and how the crews might work if they were to make them again with access to the new virtual production tools and workflows.

Shahbaz Sarwar is the director and Peter Rongsted is the VFX supervisor on “Shrouded Destiny – A Star Wars Long Tale”. Jesper Tønnes is the director of “The Last Padawan 2 – A Short Star Wars Story”.

Neither film used any virtual production.

Both films set out to challenge the notion that filmmakers in Denmark can only make social realism; as Shahbaz said, “Denmark has the potential for making science fiction.” The two directors wanted to challenge that notion in terms of production difficulty as well as crew and cost. When making a Star Wars fan film, you are not allowed to pay anybody, and neither of them did: both teams consisted entirely of volunteers.

During the fireside conversation, the crews were also asked whether they could see a future for virtual production in Denmark.

Both crews agreed that the technology is currently too expensive and not very accessible for the regular filmmaker or for people making indie projects like theirs. Although Shahbaz and Jesper agree that there are a lot of possibilities, challenges remain: “If the cost of bringing the crew, feeding them, waiting for weather – you know all that kind of stuff, when that is more expensive than going to a virtual set and building the whole set in a computer, and working out that whole system. When that becomes cheaper than going to the location – then you will see people going to the virtual sets,” Jesper Tønnes explained.

Shahbaz also mentioned that, in his view, the problem with the new technology is that you lose the natural feel of an outdoor location, which you cannot get on an indoor set.

Still, Tønnes thinks that “just a small screen [LED wall] would be amazing to have. It would ease the workflows.”

He also agrees with Shahbaz that big companies have to lead the way and establish the industry, because they are the ones with the money.

The two teams have some reservations about Virtual Production pipelines and technologies. However, they ended the conversation by noting that there are two sides to using the technology: one can choose it to make a production cheaper, or as an aesthetic choice. As Jesper, the director of “The Last Padawan 2”, said: “Virtual Production will have its own feel to it – like musicals have etc. and that is an aesthetic, you choose when making art or producing a movie.”

See the full conversations, trailers and behind the scenes here: Youtube

(Re)watch the full recorded live-stream here:

Evening Two: https://youtu.be/oAROPM4aqK8

With the final words from the two Star Wars fan film teams, Alex and Henrik concluded the ViZARTS Summit 2020 by thanking Nordisk Film Fonden, Samsung, Aalborg University, the steering committee, the speakers, all the participants, the families, friends and everyone involved in organizing the event, including Amunet Studio, who built and broadcast the event from the ViZARTS SouthHaven Studio in the SMILE Lab.