VIZARTS SUMMIT 2019

Futures of Storytelling from Sketches to Screens

3rd-5th December 2019

LOOKING BACK AT THE ViZARTS SUMMIT:

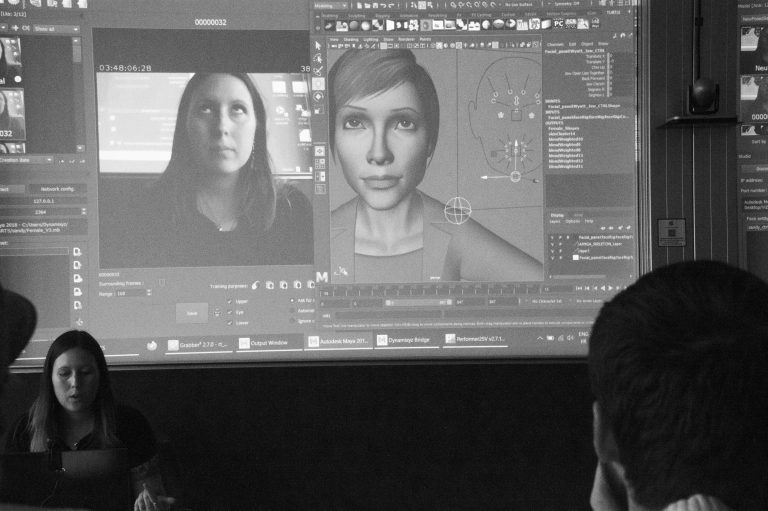

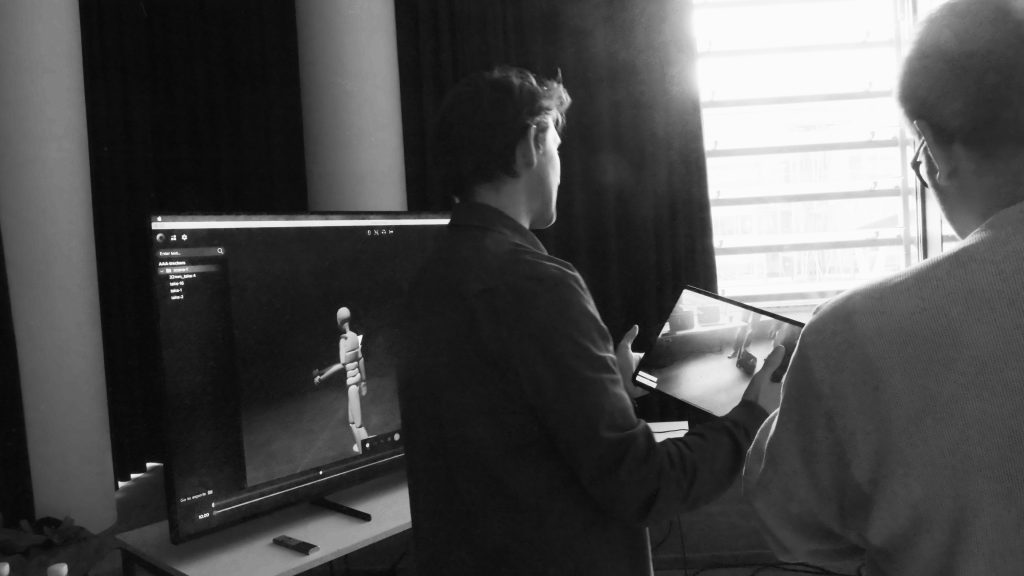

The ViZARTS SUMMIT 2019 focused on rapidly evolving real-time technologies and how they can be incorporated into tomorrow’s film, TV and animation pipelines.

From motion capture and real-time engines to AI and version control, we covered it all in our 7 alluring keynote talks, 17 informative hypertalks, 7 comprehensive workshops and 12 inspiring demos.